Blog

Why incrementality is harder than you think

Why incrementality is harder than you think

Nishant Sharma

Technical Director

14 min read

March 11, 2026

Nine incrementality challenges: Why tools built for dashboards break on Customer 360

1,000 new rows landed in your 10-million-row table overnight.

Did your pipeline scan all 10 million to find them? Or just the 0.01% that changed?

Your warehouse bill depends on the answer. So does your pipeline’s reliability as data volumes grow.

The challenge is that incremental computation is genuinely hard to get right. Not because the concept is complicated, but because "incremental" isn't one problem. It's several problems, and different tools solve different subsets of them. This matters more than ever as teams move beyond analytics dashboards to use cases like Customer 360 and AI-powered activation, where the requirements are fundamentally different.

This post explains why. It covers the foundational concept most comparisons skip (grain), then walks through the nine specific challenges that separate the three generations of incremental SQL primitives. By the end, you'll have a framework for picking the right tool for your use case, and you'll understand why Customer 360 and AI-era activation pipelines require something fundamentally different from the tools built for analytics dashboards.

Incrementality is not one problem

Every data team wants incremental processing. Scan less data, pay less compute, get faster results. The concept is simple enough. The reality is messier.

Incrementality breaks down into five distinct sub-problems:

- What's new: identifying the delta since the last run

- What's affected: knowing which downstream models need updating

- What's the merge logic: combining old state with new data correctly

- What if upstream changed: handling cascading invalidation when a dependency rebuilds

- What's the grain: are you computing per time window, per entity, or both?

Different tools solve different subsets of these problems. Understanding which problems each primitive actually addresses is what makes the difference between a pipeline that scales and one that quietly produces wrong data.

Grain is the last item, and it is foundational. It shapes everything else. So before getting into the tools, it’s worth being precise about what grain means.

What is grain, and why does it define everything?

Grain is the fundamental unit your computation operates on. It answers: “What’s the smallest thing I’m computing a result for?”

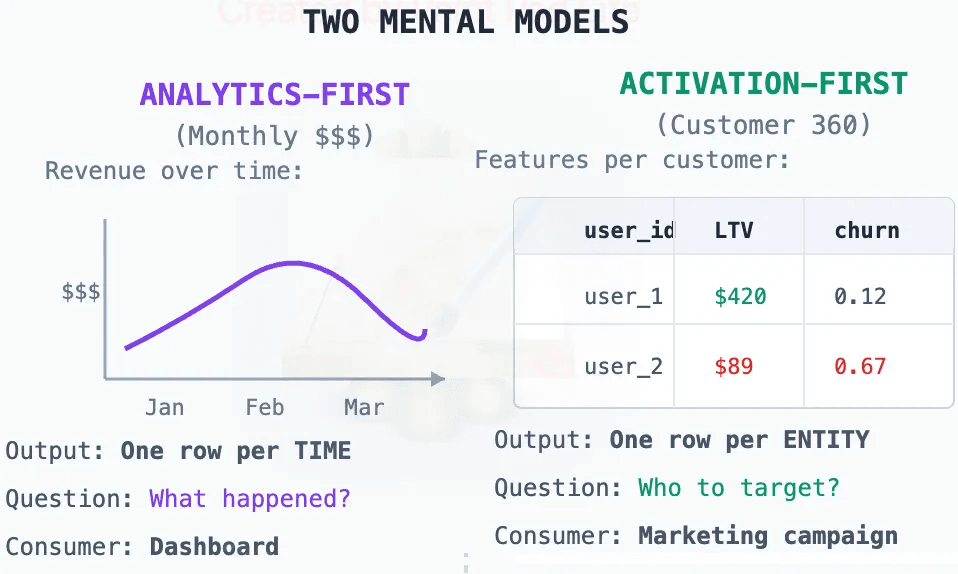

Two grains dominate data pipelines today: time grain and entity grain. They look similar on the surface but require completely different incremental strategies.

The diagram below shows the distinction clearly. Analytics-First pipelines operate on time grain, outputting one row per time window. The consumer is a dashboard asking "What happened?" Activation-First pipelines operate on entity grain, outputting one row per entity. The consumer is a campaign, a model, or an AI agent asking "Who to target?"

Why dbt chose time grain

dbt emerged from the analytics world. Analytics is dashboard-first, and dashboards are plot-first. Plots have a time axis.

When your mental model is “aggregations that become plots,” time is the natural grain. You ask: “What was the revenue yesterday?” “How many users were active this week?” “What’s the trend over the last 30 days?”

dbt’s incrementality follows directly from this assumption: new data arrives for new time windows. Process the new windows, append to the existing table, done.

Microbatch makes this explicit: You declare event_time and batch_size: day, and dbt processes one time window at a time.

For analytics dashboards, this is exactly the right design. It was the right choice for the problem dbt was built to solve. dbt's merge strategy with `unique_key` does allow updating existing rows (upserts), so it's not strictly append-only. But this is row-level deduplication, not entity-grained incrementality. It cannot detect which entities are missing from the table, and it cannot handle identity merges where two rows collapse into one.

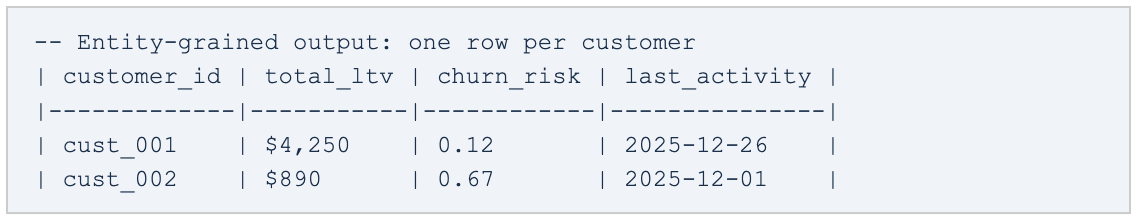

Why Customer 360 needs entity grain

Customer 360 is not plot-first. It’s activation-first.

The output is one row per customer, not one row per day. You’re not asking “What was the total revenue yesterday?” You’re asking: “What is this customer’s lifetime value?” “Which users are at churn risk?” “What cohort does this account belong to?”

Customer 360 is built on entity grain, and that changes how incrementality works. Unlike time-grained pipelines where new data is always additive, entity-grained pipelines have to account for three additional cases:

Incrementality for entity grain works differently in three important ways:

- New events arrive for existing entities → update their features

- New events create new entities → add them to the table

- Identity edges link two IDs → merge entities (entity count goes down)

That third point is the critical one. In time-grained systems, new data is additive. In entity-grained systems with identity stitching, new data can be subtractive: two entities become one.

This is not an edge case. It is the normal operation of any Customer 360 that resolves identities.

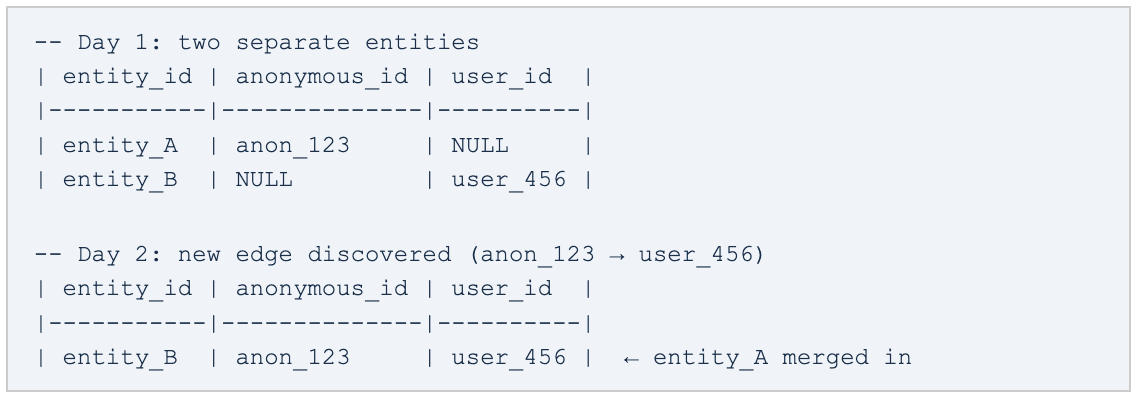

The identity graph complication

Every real-world customer data pipeline has to deal with fragmented identity. A user visits your site anonymously, then logs in. Now you have two records for the same person.

An identity graph resolves this by creating edges between identifiers. When a new edge is discovered, entities merge:

Time-grained incrementality cannot handle this correctly. It assumes rows are additive. Identity stitching means rows can collapse. The row count goes down, not up.

This is why identity stitching has to be a first-class concern in entity-grained pipelines. It cannot be an afterthought bolted on downstream.

Late-arriving data makes both grains harder

Late data complicates incrementality regardless of grain, but in different ways.

For time grain: you compute “revenue for December 25th” on December 26th. On December 28th, a batch of mobile events with December 25th timestamps arrives (offline sync). Your December 25th aggregation is now wrong.

For entity grain: you compute user_456’s LTV on December 26th. On December 28th, a late event reveals they made a purchase on December 25th. LTV is now understated.

For identity-stitched entity grain: you computed features for entity_A and entity_B separately. On December 28th, a late event reveals they’re the same person. You need to merge features, not just update them.

Each generation of incremental primitives handles these cases differently. The challenges section below maps which tools address which problems.

The nine challenges of incremental SQL

With grain established, here is the full picture: nine specific challenges that any incremental pipeline must eventually confront, and how each generation of primitives addresses them.

| Challenge | is_incremental() | Microbatch / Intervals | this.DeRef() | Notes |

|---|---|---|---|---|

1. Delta computation | Manual | Automatic | Automatic | You write the WHERE clause in Gen 1 |

2. Merge logic | Manual | Automatic | Automatic | UNION/MERGE written by hand in Gen 1 |

3. Cascading invalidation | X | ✔️ | ✔️ | Profiles trackes model hashes; SQLMesh has aprtial version tracking |

4. New entity discovery | X | X | ✔️ | Time-based tools don't track entity gaps |

5. Late-arriving data | Manual | ✔️ (lookback) | ✔️ | dbt Microbatch lookback reprocesses last N windows |

6. Multi-baseline comparisons | X | X | ✔️ | Names checkpoints (daily/weekly/monthly) in Profiles |

7. Conditional dependency chains | X | X | ✔️ | Profiles .Except() creates negative prerequisites |

8. Model invalidation | X | ✔️ (conditional) | ✔️ | Definition change propagation across the graph |

9. Entity-grained incrementality | X | X | ✔️ | Core to C360; irrelevant to time-grained analytics |

Important notes on the challenges in the table above:

- Delta computation: With

is_incremental(), you writeWHERE timestamp > (SELECT MAX(timestamp) FROM {{ this }})yourself. This is error-prone and repeated in every model. - Merge logic: You write

UNION ALLorMERGEstatements manually. Easy to get wrong on deduplication, ordering, and nulls. - Cascading invalidation: If an upstream model rebuilds, downstream incremental logic uses stale assumptions. No error, no warning. Just silently wrong data. SQLMesh has conditional cascading. It classifies changes as breaking(full downstream rebuild) or non-breaking (no cascade). Profiles has unconditional cascading via model hashes and Enable Status convergence

- New entity discovery: Users who signed up after the last feature run are not in the feature table. Time-based incrementality does not see this. It processes time windows, not entity gaps.

- Late-arriving data: Events that land after the time window closed. dbt Microbatch’s lookback parameter reprocesses the last N time windows on each run. This works for time grain; entity grain requires a different approach.

- Multi-baseline comparisons: Comparing today vs last week vs last month in the same pipeline. dbt only has

{{ this }} (last run). Profiles’ this.DeRef()with named checkpoints enables comparisons against arbitrary named states (daily, weekly, monthly). - Conditional dependency chains: “Only run this model if X exists.” dbt cannot express this; dependencies are static.

Profiles’ .Except()creates negative prerequisites, enabling conditional DAG semantics. - Model invalidation: When a model’s definition changes, how does that propagate to downstream models? Profiles tracks model hashes; upstream changes automatically invalidate downstream incremental assumptions.

- Entity-grained incrementality: Time-grained tools process new time windows; they have no concept of which entities are missing or need updating. Entity-grain requires tracking state per customer, account, or user, including handling identity merges where row counts decrease rather than increase.

Why these challenges are the norm, not the exception

These aren’t theoretical edge cases. They are patterns that any pipeline operating at scale with real-world data messiness will eventually hit. Three examples from Customer 360 practice:

Cascading invalidation in an identity rebuild

A team rebuilds their identity graph after a schema change. All downstream feature models continue running incrementally, but their incremental logic is now based on stale identity mappings. No errors surface. Wrong data flows into activation campaigns for a week before anyone notices.

Root cause: downstream models had no way to know the upstream identity graph had been rebuilt.

New entity discovery gap

A weekly feature run completes on Sunday. Users who sign up Monday through Saturday are not in the feature table until the following Sunday run. The marketing team finds that “new users” consistently have null LTV scores.

Root cause: time-based incrementality does not track which entities are missing. It processes time windows, not entity gaps.

Systematic error from late mobile data

Mobile events arrive 24 to 48 hours late due to offline sync. Daily aggregations are always missing the previous day’s mobile data. Metrics dashboards are systematically wrong. Not by a lot, but consistently and silently.

Root cause: no automatic handling of late-arriving data for time-grained outputs in the pipeline.

The insight: These aren’t failures of implementation. They’re failures of the wrong primitive for the use case. Tools that don’t address these challenges push the complexity to the engineer, who often doesn’t discover the problem until it’s compounded downstream.

Three generations of incremental primitives

The nine challenges above map to three generations of primitives"Generation" here refers to when the primitive emerged, not a ranking. Gen 2 and Gen 3 are orthogonal—they solve problems on different axes, not successive versions of the same solution. It's worth understanding what each generation does and where it stops.

Gen 1: is_incremental() and the boolean question

Era: dbt (2016 to present).

Core idea: incrementality is a yes/no property of a single model.

is_incremental() is the most widely used incremental primitive. It is elegantly simple, and the simplicity is intentional: It gives you a handle to the existing table, lets you write any logic you want, and distinguishes first run from incremental run.

What it solves:

✓ Distinguishes first run from incremental run

✓ Gives you a handle to the existing table ({{ this }})

✓ Maximum flexibility. You write all the logic.

What it doesn’t solve:

✗ Delta computation: you write the WHERE clause manually, in every model

✗ Merge logic: you write the UNION or MERGE manually

✗ Cascading invalidation: if upstream rebuilds, your incremental logic may be wrong

✗ Named checkpoints: only one reference point, the last run

✗ Cross-model awareness: no knowledge of other models’ states

The cascading invalidation gap is worth dwelling on. Model B depends on Model A incrementally. Model A gets a full refresh (schema change, backfill, bug fix). Model B runs next: its is_incremental() returns true, so it appends only “new” rows against the stale table. No error. No warning. Just wrong data downstream.

Teams working with is_incremental() at scale develop workarounds: defensive runtime queries, variable flags, manual coordination. They work, but they’re brittle. The fundamental issue is that is_incremental() treats incrementality as a property of a single model, not of the dependency graph.

Gen 2: Microbatch and Intervals, with time as a first-class citizen

Era: SQLMesh (2022-), dbt Microbatch (2024).

Core idea: time windows are the unit of computation.

Gen 2 primitives understand time semantically. Instead of “Am I running incrementally?” they ask “Which time windows need processing?”

dbt Microbatch:

SQL

SQLMesh Intervals:

SQL

SQLMesh goes further with Virtual Data Environments: promoting code to production is a view swap, not a table rebuild. Bottom line: SQLMesh is more architecturally sophisticated for time-interval handling. dbt Microbatch brings dbt closer to parity, but within dbt’s existing model. The right choice depends on your team’s existing investment.

What Gen 2 solves:

✓ Automatic time partitioning: the framework splits data into windows

✓ Parallel processing: multiple time windows can run concurrently

✓ Idempotent reruns: reprocess “yesterday” without touching “last week”

✓ Late data handling: built-in lookback mechanisms

✓ Gap detection: SQLMesh knows which intervals are missing

What Gen 2 doesn’t solve:

✗ Named checkpoints: still just time windows, not semantic reference points

✗ Cross-model state dependencies: no concept of “run this only if that model’s checkpoint exists”

✗ Conditional DAG: dependencies are static, not conditional on state

✗ Entity-grained incrementality: designed for time-series, not Customer 360

The key distinction

Gen 2 primitives are optimized for time-grained outputs (daily revenue, hourly DAU, event aggregations by time window). They are the right tool for analytics dashboards. But Microbatch doesn’t apply to Customer 360 because C360 output is entity-grained, not time-grained. The “batch” in C360 is “new events affecting entities” or “new identity edges,” not “new time windows.”

Two dimensions, not one evolution

Most comparisons of these tools treat Gen 1, Gen 2, and Gen 3 as a linear progression. That’s not quite right. Gen 2 and Gen 3 are orthogonal; they solve problems on different axes.

| Single-model incrementality | |

|---|---|

Boolean only | is_incremental() |

Time-aware | Microbatch / Intervals |

State-aware (entity + graph) | this.DefRef() (Gen 3) |

Gen 2 improves single-model time-aware processing. Gen 3 adds graph-level state management and entity-grain support. They operate on different axes. You can use both, and for many teams you will.

Output grain is what determines which tools apply to your problem. If your output grain is time (daily revenue, hourly events), Gen 2 is likely your answer. If your output grain is entity (customer features, LTV, cohorts), you need Gen 3.

When to use which: A practical guide

| Primitive | Use when/avoid when |

|---|---|

Gen 1: is_incremental() | Use: you want full control, your pipeline is simple, you’re already expert at dbt patterns.Avoid: you have complex dependency chains or need cross-model state awareness. |

Gen 2: Microbatch / Intervals | Use: output is time-grained (analytics dashboards), you need parallel batch processing, automatic gap detection.Avoid: output is entity-grained (C360) or you need conditional DAG semantics. |

Gen 3: this.DeRef() | Use: building C360 / entity-centric models, need named checkpoints, have complex incremental dependencies, require graph-level cascading invalidation.Avoid: you need real-time assembly (this is batch), or you prefer full SQL control over declarative abstraction. |

The bottom line

Incremental SQL is not one primitive. It’s a family of primitives at different levels of capability, designed for different output grains and different problem surfaces.

For analytics (time-grained dashboards, event aggregations, trend reporting), Gen 2 (Microbatch or SQLMesh Intervals) is likely sufficient. These tools were built for exactly this use case and they do it well.

For activation (Customer 360, identity stitching, entity features for AI), you need graph-level state management. That means tracking what changed across the dependency graph, resolving identities as a first-class concern, and handling the subtractive nature of entity merges. That’s what Gen 3 (this.DeRef()) was built for.

The tool you pick encodes assumptions about your grain. Picking the wrong one doesn't cause immediate failures. It causes the quiet, compounding kind: stale features, missing entities, systematically wrong metrics. The nine challenges in this post are a map to those failure modes.

Part 2 of this series will dive deeper into the architectural implications: why AI agents need something more fundamental than better metadata, and how the Semantic Intent Compiler changes the agent's output target, not just its inputs.

If you're building pipelines that power Customer 360 or AI-driven activation, the tool you pick encodes assumptions about your data. Pick a time-grained tool for an entity-grained problem and you won't get immediate failures. You'll get silent ones: stale features, missing entities, systematically wrong scores flowing into campaigns and models.

The nine challenges in this post are a map to those failure modes. The fix isn't more complex SQL. It's the right primitive for the grain. For activation use cases, that means graph-level state management, identity stitching as a first-class concern, and named checkpoints across the dependency graph.

That's what this.DeRef() was built for. Explore the RudderStack Profiles documentation to see it in practice.

Published:

March 11, 2026

More blog posts

Explore all blog posts

Understanding event data: The foundation of your customer journey

Danika Rockett

by Danika Rockett

How AI data integration transforms your data stack

Brooks Patterson

by Brooks Patterson

Behavioral segmentation: Examples, benefits, and tools

Brooks Patterson

by Brooks Patterson

Start delivering business value faster

Implement RudderStack and start driving measurable business results in less than 90 days.